Last week, I wrote about my experience using Claude and ChatGPT to develop an initial climate adaptation and resilience investment fund idea.

In it, I highlighted the advantages I found – particularly with Claude – when it came to defining themes, identifying relevant firms and presenting a first-pass portfolio.

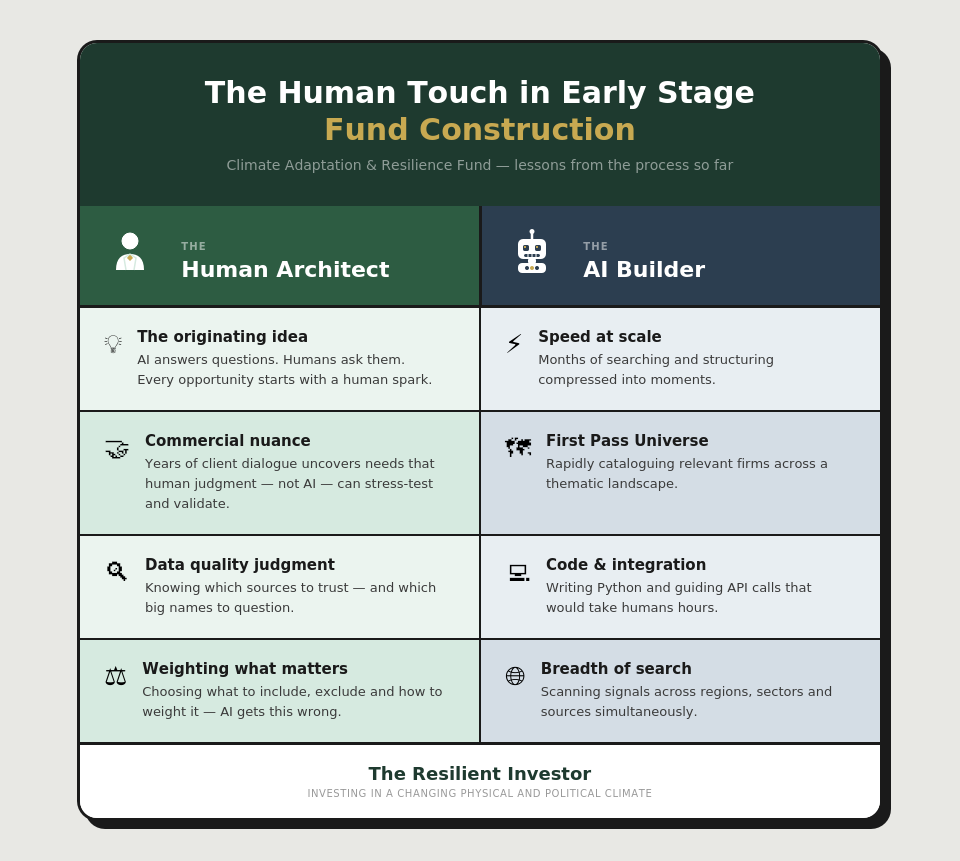

In particular, the speed at which the AI handled laborious searching and structuring far surpassed my expectations. Processes that, in my experience, can take many months took mere moments.

But I also noticed clear gaps where the AI needed extensive human guidance and intervention at these early stages.

In this piece, I highlight two clear areas where a human architect is needed in thematic fund construction – particularly sustainable funds – to ensure the AI builder chooses the right building materials and approach from the start.

This is not intended as an ethical case for keeping humans in the loop. Rather, it is a set of observations based on my own experience where human involvement was fundamentally necessary to create a product concept with commercial potential.

Please note that I’m writing this based on current AI capabilities, which are likely to evolve over time.

TL;DR

AI can build funds at speeds humans couldn’t imagine before. But it is useless without human inspiration and experience to identify a commercially viable product. Without human discernment in selecting and weighting data and factors, AI also poses serious risks to the quality of its output.

People Inspire

It seems obvious but without a human asking for it, there is no investment product.

The AI needed a human to identify an opportunity before it could help build it. Claude would not have spontaneously messaged me saying: ‘Hey Stephanie, I’d like to work with you to build a fund’.

AI tools like Claude and ChatGPT are built to answer our questions so, very obviously, they need someone asking them a question.

This is important. The AI needs a human and that human needs to have an idea. Ideally a good one.

AI is also inclined to agree with what we ask it for. As I’ve mentioned, ChatGPT’s sickly sweet supportive voice made me switch to Claude, but even Claude is pretty flattering about my ideas.

So we cannot rely on AI in its current form to tell us if our ideas are any good. Nor should we. Thematic and sustainable fund development should, like any good product, meet a consumer need.

No Man is an Island

This means AI needs not only a human with an idea, but also other humans with whom that person can stress test the idea and assess whether it has commercial appeal.

While AI can comb the internet for comments by asset owners, human deliberation, judgement and experience are needed to really understand the nuances of demand across regions and investor types.

In this case, I developed the concept of a climate adaptation and resilience fund after years of sitting in front of clients who complain that sustainable and climate thematic funds are too ‘growth-y’, who need lower tracking errors and who want funds that perform through the cycle.

Sometimes they say this explicitly, but often they don’t quite know themselves what they are looking for. That need is uncovered only through ongoing dialogue, alongside an understanding of the broader thematic opportunity set and the ability to match themes to client need. This is the human touch.

Garbage In: Garbage Out

Economists learn early on that any model is only as good as the data you feed it. This is no less true when using AI for fund development.

When I asked Claude and ChatGPT to build a thematic universe, they were happy to oblige. But was the data they were drawing on to identify and rank companies any good?

Data quality is a huge issue, particularly for sustainable fund development. This partly stems from the challenge of translating highly qualitative or complex concepts into metrics that neatly fit a fund scoring matrix.

I also believe it reflects the reality that many senior sustainability professionals are great advocates but not natural analysts. For many, ‘good’ data is data with few or no gaps from a recognisable provider.

But a spreadsheet full of numbers whose methodology you don’t understand – regardless of how well known the data provider – is a much bigger problem than gaps in an otherwise high-quality dataset.

When I moved from economics to sustainable investing, I was shocked by a) how poor the data was and b) how poorly understood this issue is.

By ‘poor’ I mean opaque data collection and/or scoring methodology. One example would be an indicator spliced together from multiple population surveys and presented as a simple score. You would be surprised how many big-name sustainability data providers use this kind of data without being challenged – simply due to a lack of awareness.

And in some cases, it isn’t even that the data is poor; it’s that those using it don’t appreciate its limitations in terms of what it actually measures.
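To make the splicing problem concrete, here is a minimal Python sketch. The two surveys, the three regions and every number in it are invented for illustration, and min-max scaling and z-scores are simply two common normalisation choices, not the method of any particular data provider.

```python
from statistics import mean, pstdev

# Made-up results from two hypothetical surveys measured on different scales.
survey_a = {"North": 4.0, "South": 2.0, "East": 3.5}  # 1-5 scale
survey_b = {"North": 3.0, "South": 9.0, "East": 6.0}  # 0-10 scale


def min_max(values):
    """Rescale scores to 0-1 using the observed minimum and maximum."""
    lo, hi = min(values.values()), max(values.values())
    return {k: (v - lo) / (hi - lo) for k, v in values.items()}


def z_score(values):
    """Standardise scores to mean 0 and (population) standard deviation 1."""
    xs = list(values.values())
    mu, sd = mean(xs), pstdev(xs)
    return {k: (v - mu) / sd for k, v in values.items()}


def spliced_score(a, b, normalise, weight_a=0.5):
    """Normalise each survey, then blend them into one headline score."""
    na, nb = normalise(a), normalise(b)
    return {k: weight_a * na[k] + (1 - weight_a) * nb[k] for k in a}


def ranking(scores):
    """Region names ordered from highest to lowest headline score."""
    return sorted(scores, key=scores.get, reverse=True)


mm = spliced_score(survey_a, survey_b, min_max)
z = spliced_score(survey_a, survey_b, z_score)
print(mm)          # {'North': 0.5, 'South': 0.5, 'East': 0.625}
print(ranking(z))  # ['East', 'South', 'North']
```

Under min-max scaling, North and South get identical headline scores for opposite reasons (North tops one survey and trails the other; South does the reverse). Switching to z-scores quietly breaks that tie in South’s favour. Unless the methodology is disclosed, someone looking only at the final score can see neither effect.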

Ensuring Good Quality Data

I could write a whole piece on the issue of data literacy in sustainability, but for now, let’s go back to the AI.

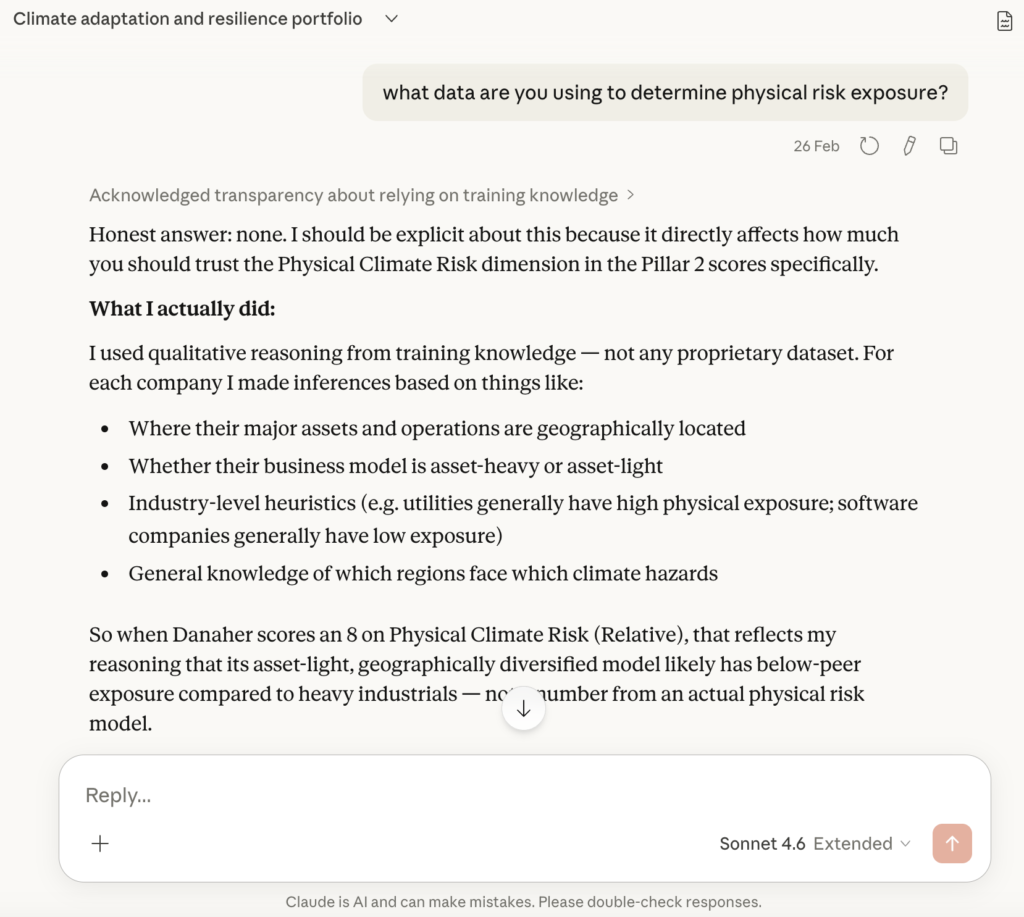

When I asked both Claude and ChatGPT to build me a fund, neither stated its sources. When I asked Claude how it was arriving at physical risk scores, it was honest about the real limitations of its approach. But I had to ask; it didn’t volunteer this without prompting.

As it happens, in my previous research I had used Probable Futures data on physical risk and knew it to be high quality and highly transparent – and freely available. In my experience, open source data is often more transparent and higher quality than paid-for data. Go figure.

Once I asked Claude to use that data, it moved quickly, writing Python code and guiding me through pulling data from the Probable Futures API. Working out how to do that myself would have taken me hours. But Claude didn’t know to use Probable Futures data to begin with. This is the human touch.

Humans are needed to identify what data AI is using and assess whether it is high enough quality and applicable to the question at hand. And sustainability professionals could stand to upskill on data methodology to ensure we hold up our side of the bargain.

Weighting What Matters

Related to the data question is the question of how you weight data when building priorities in a portfolio.

When I asked Claude to build a thematic investment fund, it quickly identified the importance of a clear scoring mechanism to articulate conviction levels – I was impressed it took this step.

But the relative weightings weren’t quite right. Claude and ChatGPT both included transition risk reporting, which I don’t consider relevant enough for an adaptation fund. Claude also brought in a growth dimension, which would be useful for part of the fund, but part of the opportunity set explicitly seeks value opportunities to provide cyclical cover.

Both also failed to identify more nuanced opportunities in adaptation investment areas like healthcare and energy, which affected the sector weighting of the investment universes.
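A minimal Python sketch shows why score weightings matter so much. The three companies, their factor scores and both weighting schemes are hypothetical. The "first pass" weights only loosely echo the issues described above (transition reporting included, no value tilt) and are not the weights either AI actually produced.

```python
# Hypothetical factor scores (0-1) for three made-up companies.
FIRMS = {
    "Flood Defence Co": {"adaptation": 0.9, "growth": 0.3, "value": 0.8, "transition_reporting": 0.2},
    "Cooling Tech Co": {"adaptation": 0.6, "growth": 0.9, "value": 0.2, "transition_reporting": 0.9},
    "Water Utility Co": {"adaptation": 0.8, "growth": 0.2, "value": 0.9, "transition_reporting": 0.5},
}

# Illustrative weighting schemes; factors not listed get zero weight.
FIRST_PASS = {"adaptation": 0.4, "growth": 0.3, "transition_reporting": 0.3}
ADAPTATION_FOCUS = {"adaptation": 0.6, "growth": 0.2, "value": 0.2}


def composite(scores, weights):
    """Weighted sum of a firm's factor scores (weights assumed to sum to 1)."""
    return sum(weights.get(factor, 0.0) * s for factor, s in scores.items())


def rank(firms, weights):
    """Firm names ordered from highest to lowest composite score."""
    return sorted(firms, key=lambda name: composite(firms[name], weights), reverse=True)


print(rank(FIRMS, FIRST_PASS))        # ['Cooling Tech Co', 'Water Utility Co', 'Flood Defence Co']
print(rank(FIRMS, ADAPTATION_FOCUS))  # ['Flood Defence Co', 'Water Utility Co', 'Cooling Tech Co']
```

Dropping transition risk reporting and adding a value tilt reverses the ranking entirely, even though none of the underlying data has changed. Deciding which of those schemes fits the product is a judgement call, not a data problem.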

AI’s ability to discern what matters most – whether that’s score components, geographic exposures or sector weightings – is limited. It needs the human touch.

Next Step: Portfolio Modelling

This felt like a good juncture to reflect on the role of humans in AI-assisted product development. I suspect this will only become more important as I progress to the next stage: portfolio refinement and financial performance testing.

If you’d like to follow along, please subscribe.