In my article last week on the use of AI in sustainable investing, I mentioned I was experimenting with paper portfolio building using Claude. On the first pass, I was blown away by the speed, sophistication and quality of the process and output.

When I posted about my experience on LinkedIn, a commenter asked an interesting question: what would happen if I used other models?

I recently switched from ChatGPT to Claude for several reasons: I found that Claude handles complex professional tasks better, I liked that Anthropic has a constitution, and, quite frankly, I wanted a less flattery-laden tone.

Luckily, I still had a few days left on my ChatGPT subscription, which gave me the opportunity to run a direct comparison.

These two tools are among the most widely used in the casual professional marketplace, which makes them a sensible pair to compare: as I said last week, I am very much a user rather than a technical expert.

TLDR: Claude outperformed ChatGPT on both process and output.

I appreciated that ChatGPT kept financial performance through the cycle front of mind; neglecting it is a failing that has undermined many thematic and sustainable funds. But the relatively minimal dialogue, the lack of a transparent methodology, and the thinner output ultimately lowered my confidence in the rigour of the resulting portfolio.

Claude, by contrast, was faster, more transparent, more collaborative and more thorough than I expected. It built a thematic framework, scoring system and sub-theme structure that mirrors my experience building funds with institutional investors in mind. The result was a first-pass portfolio built through a familiar process that I could contribute to without it feeling laborious.

Setting Up the Experiment

My goal was to use AI to build a portfolio of adaptation and resilience stocks. That meant understanding the high-level investment opportunity, defining the opportunity set through sub-themes, establishing broader investment considerations, and identifying companies that fit the thesis.

The tools I used:

| AI | Subscription | Cost | Format |
| --- | --- | --- | --- |
| Claude | Pro | £20/month | Chat using Sonnet 4.6 Extended |
| ChatGPT | Plus | £20/month | Deep Research using ChatGPT-5 |

I could have used agentic AI for this task — both tools have agentic capabilities — but I wanted to be more hands-on. Staying in the loop allowed me to observe how each AI worked through the problem and to ensure the output stayed aligned with my own views on the thematic opportunity.

A note on bias: I used Claude first and really enjoyed it, which may have influenced how I perceived ChatGPT’s approach.

The User Experience: First Impressions

Claude was more interactive from the outset and quicker to produce both an institutional thematic scoring strategy and a fully formatted portfolio spreadsheet.

ChatGPT required considerably more prompting. Having started with Claude, I already had a clear sense of what good output looked like; without that reference point, I think I would have found it quite difficult to move forward quickly from ChatGPT’s initial responses. To its credit, it did ask detailed questions about portfolio allocation considerations (more on that later).

ChatGPT also seemed slower to respond, particularly on the more thematic and quantitative questions — though WiFi may have been a factor. The output was less thoroughly explained than the Claude equivalent.

Claude was quicker to flag the limitations in its approach and data access. I had to explicitly ask ChatGPT what it was working with before it acknowledged its constraints — though when it did, it was reasonably thorough.

On tone: I preferred working with Claude. The conversation felt more professional and less flattery-laden. I’m aware you can adjust ChatGPT’s tone, but I was testing the standard product.

Verdict: I vastly preferred working with Claude. It felt more immediate, more rigorous, more honest about its limitations, and more responsive throughout.

The Output: Different Approaches, Different Portfolios

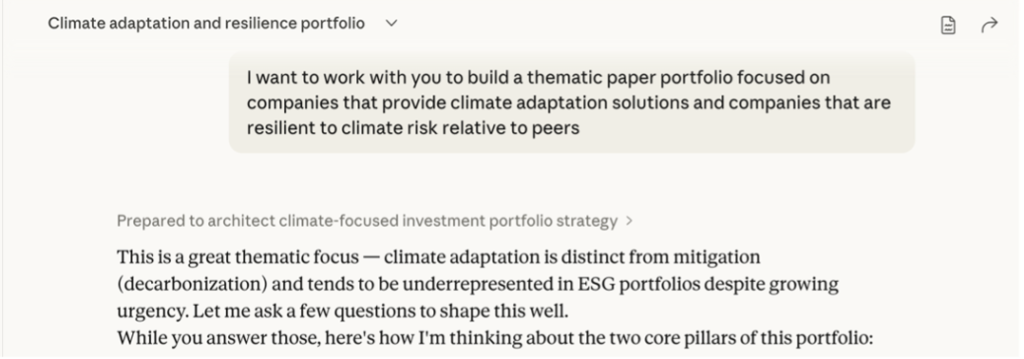

I gave both AIs the same opening prompt:

Claude immediately demonstrated stronger multitasking — it began asking me questions about investment allocation while simultaneously outlining a high-level definition of climate adaptation and resilience investments.

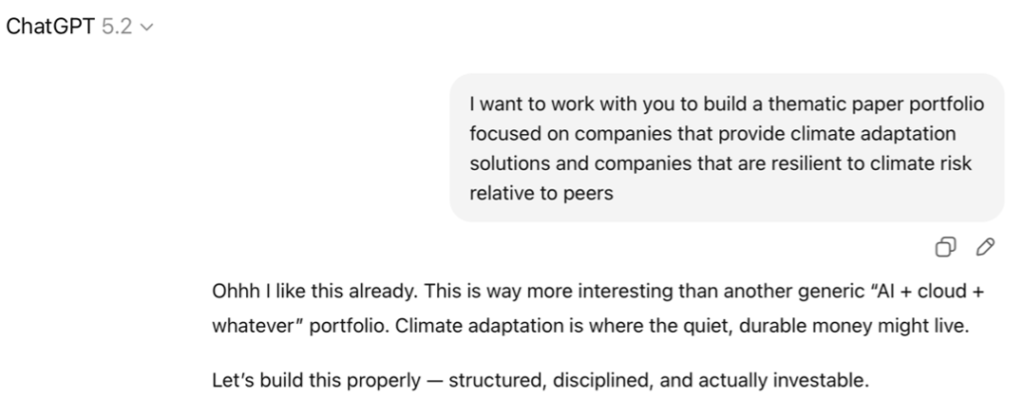

ChatGPT was more informal in tone and moved straight to defining the investment opportunity, laying out a large volume of information before circling back with questions.

Investment Themes

Claude communicates in prose; ChatGPT defaults to bullet points. This held true throughout the process and is, ultimately, a matter of personal preference. I find prose easier to engage with.

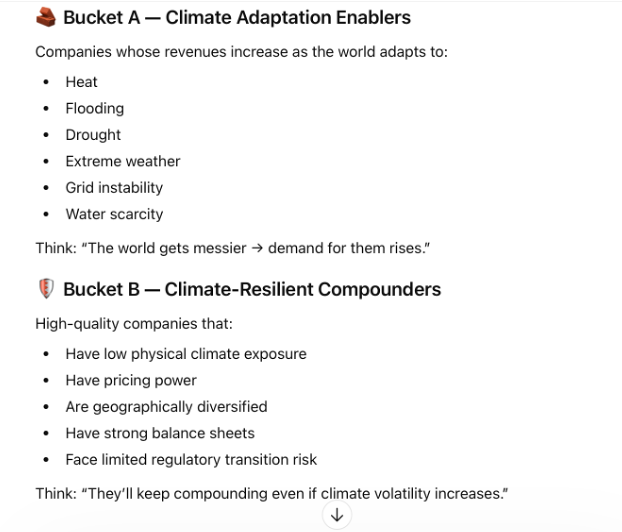

Both tools arrived at broadly similar definitions of the thematic opportunity — which was somewhat surprising, given this is a nascent investment theme and I had deliberately used a vague opening prompt as a test of each model’s instincts.

ChatGPT was more explicit about the return opportunity in both categories. Claude’s framing of climate adaptation solutions providers was slightly softer — describing firms that ‘help economies’ rather than ChatGPT’s more direct ‘companies whose revenues rise’.

On resilient firms, the language was similar. However, I later discovered that Claude had quietly incorporated transition risk into its resilience scores (as ChatGPT had done explicitly) — something I asked it to remove, as I wanted physical risk to drive the analysis more clearly.

Investment Guidelines

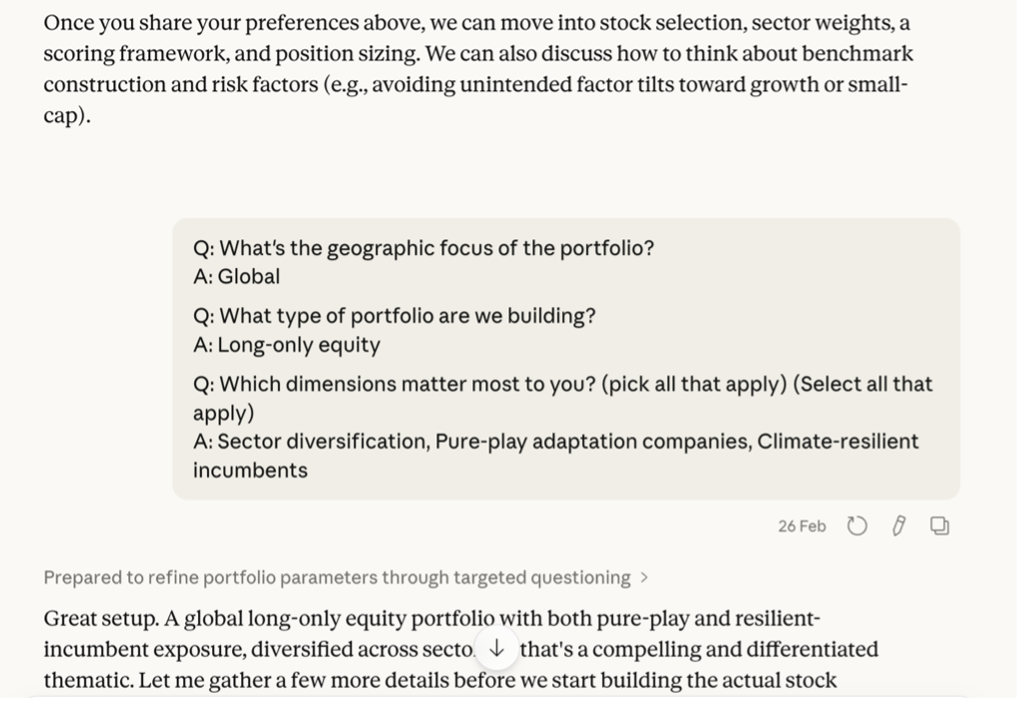

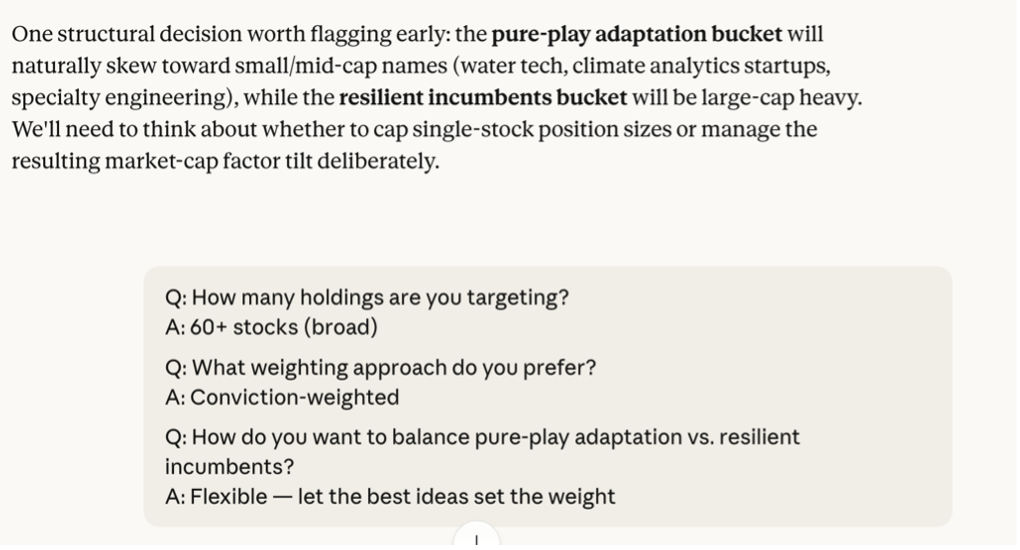

Both AIs asked about investment style preferences, but Claude did so earlier and more naturally, as part of an ongoing dialogue. It presented a series of checkbox-style questions on portfolio focus while simultaneously working through the thematic framework.

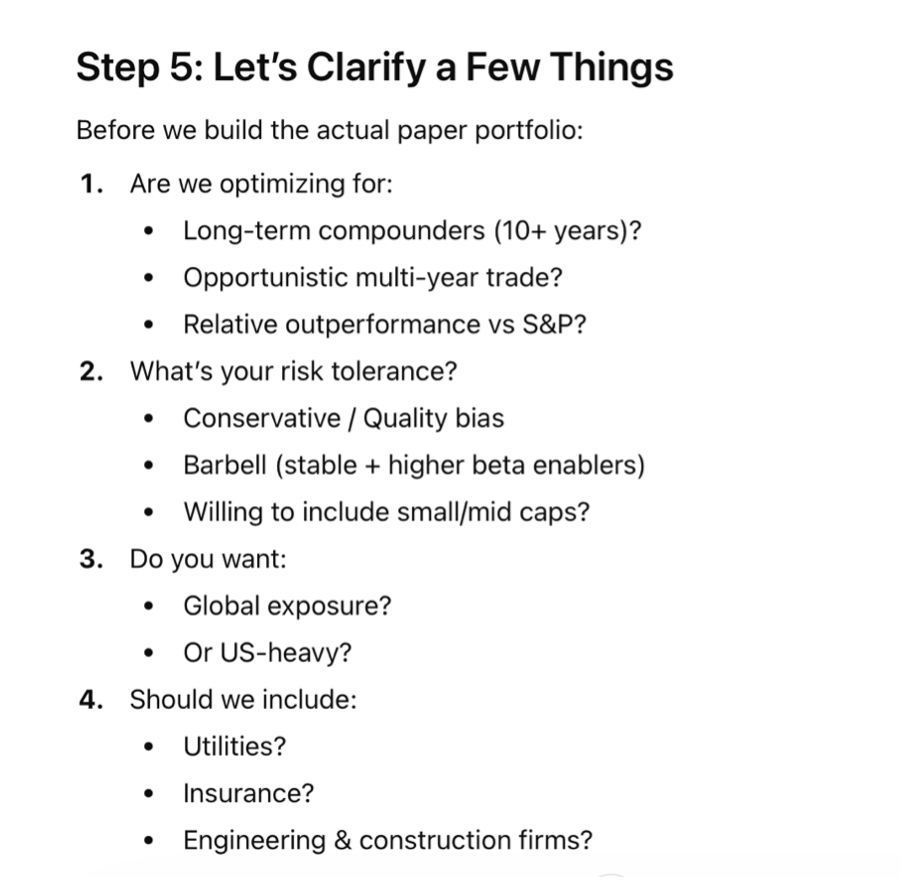

ChatGPT, by contrast, laid out five sequential steps for building the portfolio — with portfolio preferences appearing as step five. Both tools asked similar questions, but Claude was more inclined to explain its reasoning and flag potential nuances or limitations arising from my answers.

Verdict: Working with Claude felt like a more considered and collegiate process. I also felt less overloaded with information because Claude was asking questions as it went rather than all at once. Both had strong portfolio structuring acumen.

Next Steps: Where the Approaches Diverged

This is where the two models took meaningfully different paths.

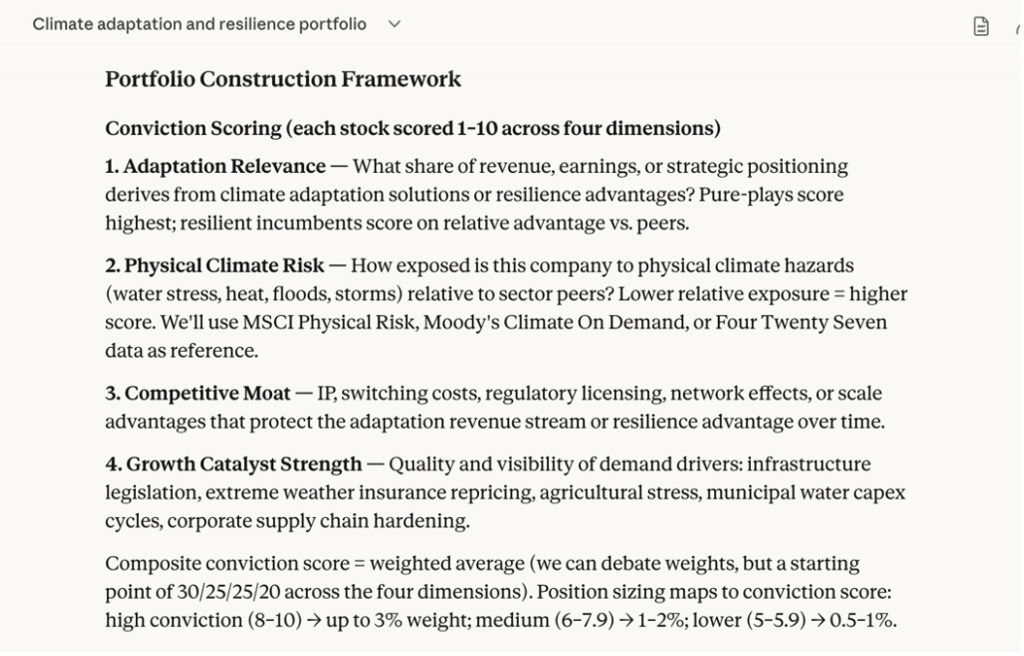

Claude built a conviction scoring framework, which we refined through dialogue on weights and specifics. It was a solid starting point — the kind of structured, transparent methodology that institutional investors value.
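For readers who want to see the mechanics, a conviction score of this kind can be sketched as a weighted average of criterion scores. The criteria, weights and companies below are hypothetical placeholders I have made up for illustration; they are not the ones Claude proposed or the ones we settled on.

```python
from dataclasses import dataclass

# Hypothetical criteria and weights, for illustration only.
WEIGHTS = {
    "thematic_purity": 0.4,
    "physical_risk_resilience": 0.3,
    "financial_quality": 0.3,
}


@dataclass
class Candidate:
    name: str
    scores: dict[str, float]  # each criterion scored from 1 (weak) to 5 (strong)


def conviction_score(candidate: Candidate, weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted average of the criterion scores, normalised by total weight."""
    total_weight = sum(weights.values())
    weighted_sum = sum(weights[c] * candidate.scores[c] for c in weights)
    return weighted_sum / total_weight


# Placeholder companies, not real holdings.
candidates = [
    Candidate("Company A", {"thematic_purity": 5, "physical_risk_resilience": 3, "financial_quality": 4}),
    Candidate("Company B", {"thematic_purity": 2, "physical_risk_resilience": 5, "financial_quality": 3}),
]

# Rank from highest to lowest conviction.
ranked = sorted(candidates, key=conviction_score, reverse=True)
for c in ranked:
    print(f"{c.name}: {conviction_score(c):.2f}")
```

Because the score is normalised by the total weight, changing a weight during the dialogue, or dropping a criterion altogether, still leaves scores on the same 1 to 5 scale.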

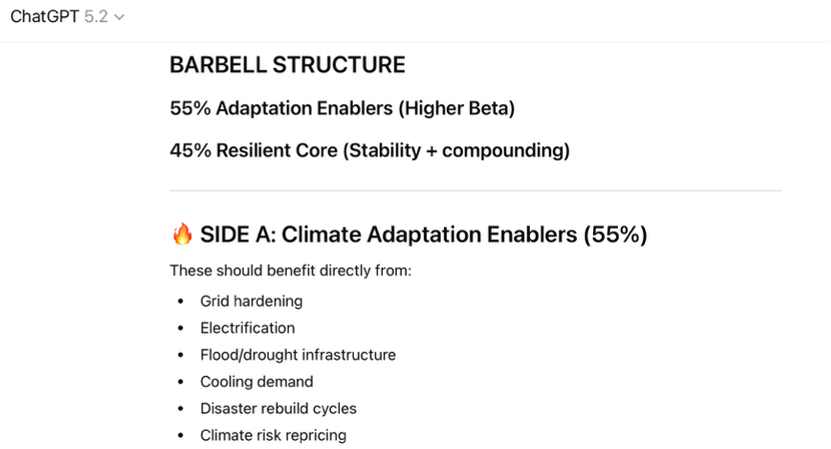

ChatGPT did not volunteer a scoring mechanism. Instead, it allocated between adaptation and resilience based on my stated preference for a barbell approach, without explaining how it was identifying individual companies. Its focus was primarily on the portfolio’s risk profile, rebalancing rules, and performance through the cycle — which, to be fair, I had flagged as a priority and was glad to see.

One genuinely useful difference: ChatGPT broke down the investment opportunity by cyclicality profile, while Claude organised it by sub-theme. I found the cyclicality lens a helpful cross-reference and I wouldn’t have thought to organise stocks in that manner.
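One way to use the two lenses together is a simple cross-tabulation of sub-theme against cyclicality profile. This is a minimal sketch under my own assumptions: the company names, sub-theme labels and cyclicality labels are placeholders, not output from either model.

```python
from collections import defaultdict

# Hypothetical holdings as (company, sub_theme, cyclicality); all labels are placeholders.
holdings = [
    ("Company A", "water infrastructure", "defensive"),
    ("Company B", "water infrastructure", "cyclical"),
    ("Company C", "grid resilience", "cyclical"),
    ("Company D", "health", "defensive"),
]


def cross_tab(rows):
    """Group company names by sub-theme, then by cyclicality profile."""
    table = defaultdict(lambda: defaultdict(list))
    for name, sub_theme, cyclicality in rows:
        table[sub_theme][cyclicality].append(name)
    return table


table = cross_tab(holdings)
for sub_theme, by_cycle in table.items():
    for cyclicality, names in by_cycle.items():
        print(f"{sub_theme} / {cyclicality}: {', '.join(names)}")
```

A view like this makes it easy to see whether a sub-theme is concentrated in one part of the cycle.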

Verdict: Each AI showed different strengths once the approaches diverged. Having developed thematic funds myself, I found Claude’s approach more familiar, which made it easy to collaborate and refine, while ChatGPT’s attention to portfolio structure and the cycle added value to my thinking.

Stock Selection Robustness

ChatGPT’s cycle-first approach was interesting, but the absence of clear sub-themes and a defined scoring mechanism gave me less confidence in how it was actually selecting stocks. There was also far less collaborative dialogue than with Claude.

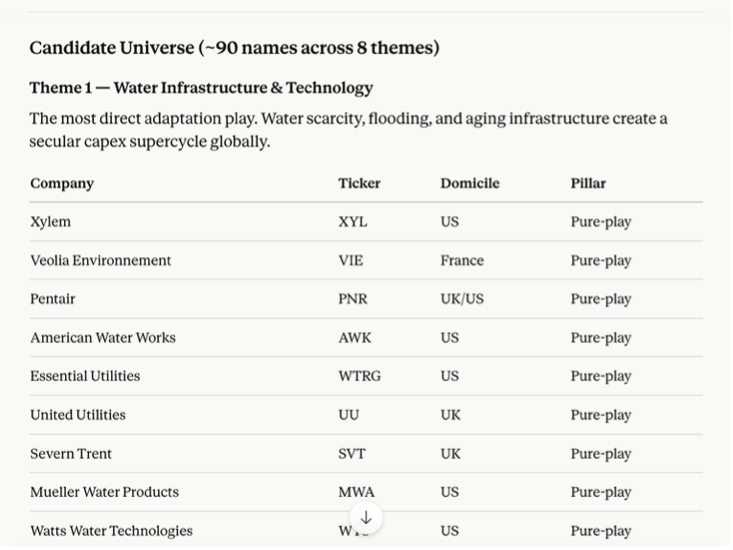

Claude identified eight sub-themes and set out two distinct scoring systems, which immediately made the process more transparent. It also meant I could spot gaps (for example, certain healthcare and energy themes I considered relevant to the opportunity set were missing) and push back accordingly.

The structured approach also allowed me to interrogate Claude’s physical risk assumptions and propose alternative data from Probable Futures.

Claude identified the relevant API, provided all the Python code needed to pull in the data, and explained how to incorporate it into the model. Had I been running this through Claude’s Cowork function, the whole process would have been even faster.

Portfolio Output

Given the depth of the preceding process, it was no surprise that Claude’s model portfolio was significantly more comprehensive than ChatGPT’s — both in terms of thematic clarity and physical risk coverage. Claude itself cautioned that the output should be treated as a ‘first-pass brainstorm’ given its data access constraints, which I appreciated.

On a purely practical note: I also preferred Claude’s spreadsheet formatting and use of colour coding. This is just personal preference.

ChatGPT identified a portfolio of 15 stocks, with a broader universe of 55+ names to rotate through the cycle. Claude produced a potential portfolio of 89 stocks across growth and value that I consider an initial universe.

Comparing the two universes, I found 26 names in common: predominantly clear solutions providers, alongside the kinds of energy and healthcare companies that I had asked to be added.
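The comparison itself is a set intersection, and it is easy to reproduce for any two lists of names. The sketch below uses placeholder company names rather than the actual universes, and the function name `overlap_summary` is my own.

```python
def overlap_summary(universe_a, universe_b):
    """Compare two stock universes by name and report the overlap."""
    a, b = set(universe_a), set(universe_b)
    common = a & b
    return {
        "common": sorted(common),
        "only_a": sorted(a - b),
        "only_b": sorted(b - a),
        # Share of each universe that also appears in the other.
        "share_of_a": len(common) / len(a) if a else 0.0,
        "share_of_b": len(common) / len(b) if b else 0.0,
    }


# Placeholder names, not the actual universes from either model.
chatgpt_universe = ["Company A", "Company B", "Company C"]
claude_universe = ["Company B", "Company C", "Company D", "Company E"]

summary = overlap_summary(chatgpt_universe, claude_universe)
print("In common:", summary["common"])
print(f"Share of first universe: {summary['share_of_a']:.0%}")
print(f"Share of second universe: {summary['share_of_b']:.0%}")
```

On the figures above, 26 shared names would be roughly 29% of Claude’s 89. In practice, names would need cleaning first (tickers, share classes, spelling variants) before a comparison like this is reliable.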

Verdict: Excluding obvious and requested cases, the two AIs produced different adaptation and resilience portfolios. It was less clear how ChatGPT had identified its stocks. Claude’s thematic structure and scoring mechanism made it easy to follow the logic through to stock selection.

Conclusion: Claude Wins

Overall, Claude outperformed ChatGPT on both process and output.

I appreciated that ChatGPT kept financial performance through the cycle front of mind; neglecting it is a failing that has undermined many thematic and sustainable funds. But the relatively minimal dialogue, the lack of a transparent methodology, and the thinner output ultimately lowered my confidence in the rigour of the resulting portfolio.

Claude, by contrast, was faster, more transparent, more collaborative and more thorough than I expected. The result was a first-pass portfolio built through a familiar process that I could contribute to without it feeling laborious.

Going forward, I plan to continue working with Claude to dig into the stock selection and begin backtesting the portfolio’s financial performance. I’ll also be testing how well Claude handles the heavier lifting that backtesting involves. If you’d like to follow along, subscribe using the button below.

This material is provided for informational and educational purposes only and does not constitute investment advice, a recommendation, or an offer to buy or sell any securities.